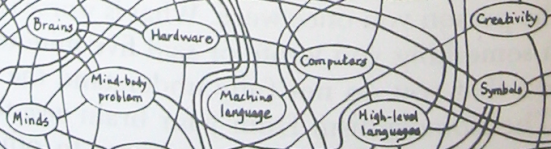

The Semantic Web is a network of interconnected information.

People can publish details of social and events networks; wireless access points can broadcast their location to machines; crawlers collect information and use it, with rules about inference or translation, to create new pieces of proposed knowledge.

We have worked towards republishing information physically near its site of origin and relevance, envisioning an information topography which comes to resemble a network topography: spread out, discontinuous, without centre and with many centres.

Knowledge representation is an ancient activity; we trace our tradition back to the work of Ramon Lull, a mystic writing in the thirteenth century.[0],[1]

Christopher Alexander is an inspiration in this area; his group worked in the 60s on a people-centred, process based, "Pattern Language" to describe spatial situations and problems.

His thinking was taken up by a group of software theorists in the early 1990s, who produced a book of "Design Patterns" for the relatively new-fangled object-oriented style of programming.

Patterns have often concretised at a very low level and become methodologies; Alexander wrote:

But as things are, we have so far beset ourselves with rules, and concepts, and ideas of what must be done to make a program or a network alive, that we have become afraid of what will happen naturally, and convinced that we must work within a "system", and with "methods", since without them our surroundings will come tumbling down in chaos.

We can model our small world, our bit of the city; potentially, obtain land use maps (for a price!), directories of stakeholders in spatial services...

We are built on networks, and their inputs and outputs into smaller systems, and if data can be gathered from all the nodes, and the systematic effects be analysed, then perhaps optimisations can be suggested, infrastructure improvements be stimulated.

The Oyster card tracking system implemented on London transport networks now has some of this nature; with a very simple set of inputs, it measures the movement of travellers when they make payment (and at other times when their RFID card may be scanned). Usage pattern analysis may show where vital improvements are most urgently needed.

Most of us are dependent on the National Grid for electricity service provision. Some people, by investment in solar and other alternative energy sources, generate enough to sell back to the National Grid.

When you move and travel, when you communicate wirelessly, when you purchase things to fulfil basic needs, you are generating a wash of information, you are excreting data. (You seldom have access to, let alone ownership of, most of it.) This happens at a few places and interfaces where observations can be made and recorded, and messages distributed.

How about harnessing the negentropy of the information we all generate? How about being able to run a kind of "personal proxy" that makes a copy of all the information in-the-world that is accessible at the time that information leaves me?

Information about systems, and about the agents interacting with them, needs to be available, and updated, right at the point of its production, and disseminated from a point of distribution. The system we have built for wirelesslondon is very simple; each node only has to be able to tell its MAC address, its twelve-hex-digit network interface identity; and it receives an updated list of local information, once the node owner, a human, has associated that address with a co-ordinate location.
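The exchange described above can be sketched in a few lines. This is only an illustration and not the wirelesslondon code: the names `NodeRegistry`, `Notice`, `associate` and `local_information`, and the 500 metre radius, are all assumptions made for the example.

```python
import math
from dataclasses import dataclass


@dataclass
class Notice:
    """A piece of local information pinned to a co-ordinate (hypothetical type)."""
    text: str
    lat: float
    lon: float


def normalise_mac(mac: str) -> str:
    """Reduce a MAC address to its twelve hex digits, lower-cased."""
    digits = "".join(ch for ch in mac.lower() if ch in "0123456789abcdef")
    if len(digits) != 12:
        raise ValueError(f"not a twelve-digit MAC address: {mac!r}")
    return digits


def distance_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Approximate great-circle distance in metres (haversine formula)."""
    radius = 6371000.0
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * radius * math.asin(math.sqrt(a))


class NodeRegistry:
    """Maps node MAC addresses to locations and answers with nearby notices."""

    def __init__(self) -> None:
        self.locations = {}  # normalised MAC -> (lat, lon)
        self.notices = []

    def associate(self, mac: str, lat: float, lon: float) -> None:
        """Called once by the human node owner."""
        self.locations[normalise_mac(mac)] = (lat, lon)

    def publish(self, notice: Notice) -> None:
        self.notices.append(notice)

    def local_information(self, mac: str, radius_m: float = 500.0) -> list:
        """All a node sends is its MAC; it gets back nearby notices, nearest first."""
        key = normalise_mac(mac)
        if key not in self.locations:
            return []  # the owner has not yet placed this node on the map
        lat, lon = self.locations[key]
        ranked = sorted(
            ((distance_m(lat, lon, n.lat, n.lon), n) for n in self.notices),
            key=lambda pair: pair[0],
        )
        return [n.text for d, n in ranked if d <= radius_m]
```

The node itself stays dumb: everything it knows about where it is lives in the registry, which is why an unassociated address simply receives an empty list.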

We have noticed wireless buses, emitting "beacon frames", in transit down Commercial Road. If we can network together our noticings - share data and analysis of patterns - perhaps we can build schedulers that are more responsive to usage trends and problems. Perhaps we can build some cheap informative bus stop display, really just a programmable poster, including more than scheduling information...?

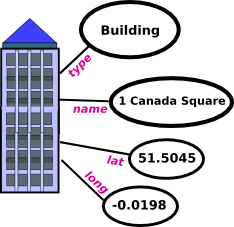

The Semantic Web is like cartoon knowledge representation. "Here is a building, and it has these known properties, that might be useful to others". We can get much more depth of data, though; access to machine-readable plans, rather than human-readable scans of planning permission applications and property maps, allows us to connect our map and our model up to a lot of information about land use planning.

The semweb is built on small specialist vocabularies, or ontologies; there is a logical schema language which is used to express equivalences between them, that can be used in a kind of machine translation / reasoning process.
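A toy version of that translation step, in plain Python rather than in RDF and a real schema language: statements are (subject, property, value) triples, and a table of declared equivalences rewrites properties from two small vocabularies into a shared one. The vocabulary prefixes and property names (`cafe:placeName`, `bus:stopLabel`, `geo:name`) are invented for illustration.

```python
# Hypothetical equivalences between properties of two specialist
# vocabularies and one shared vocabulary.
EQUIVALENT = {
    "cafe:placeName": "geo:name",
    "bus:stopLabel": "geo:name",
}


def translate(triples, equivalences):
    """Rewrite each property into its declared equivalent, if it has one."""
    return {(s, equivalences.get(p, p), o) for s, p, o in triples}


def query(triples, prop):
    """Return sorted (subject, value) pairs for one property."""
    return sorted((s, o) for s, p, o in triples if p == prop)


published = {
    ("node1", "cafe:placeName", "Corner Cafe"),
    ("node1", "cafe:opens", "08:00"),
    ("stop7", "bus:stopLabel", "Commercial Road"),
}

# After translation, one question reaches data from both vocabularies.
names = query(translate(published, EQUIVALENT), "geo:name")
```

Properties with no declared equivalent, such as `cafe:opens` here, pass through untouched; nothing is lost by merging, it is just not yet understood by others.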

This may sound a bit academic, but it has plenty of real-world applications; one of the trendiest is in syndicating blogs, and shared directories of pictures, by tagging them with a spatial location.

Everything can have an RSS feed, from the bus timetable generator, to the bus describing what has happened to it, the community network access point, the local hospital and fire brigade activity levels, a message-broadcaster at the end of any street. Potentially in any object, but then things start to get a little creepy and technocratic.
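As a rough sketch of what a spatially tagged feed entry might look like, the following builds an RSS 2.0 item carrying a latitude and longitude from the W3C Basic Geo vocabulary, using only the Python standard library. The helper names `geo_item` and `feed`, and the bus example itself, are assumptions for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace of the W3C Basic Geo (WGS84 lat/long) vocabulary.
GEO_NS = "http://www.w3.org/2003/01/geo/wgs84_pos#"
ET.register_namespace("geo", GEO_NS)


def geo_item(title, description, lat, lon):
    """Build one RSS <item> tagged with a point location."""
    item = ET.Element("item")
    ET.SubElement(item, "title").text = title
    ET.SubElement(item, "description").text = description
    ET.SubElement(item, f"{{{GEO_NS}}}lat").text = f"{lat:.5f}"
    ET.SubElement(item, f"{{{GEO_NS}}}long").text = f"{lon:.5f}"
    return item


def feed(title, items):
    """Wrap items in a minimal RSS 2.0 channel and serialise to a string."""
    rss = ET.Element("rss", version="2.0")
    channel = ET.SubElement(rss, "channel")
    ET.SubElement(channel, "title").text = title
    channel.extend(items)
    return ET.tostring(rss, encoding="unicode")


# A bus describing what has happened to it (invented data).
xml_text = feed("Bus 15", [geo_item("Delayed", "Held at roadworks", 51.5130, -0.0650)])
```

A real feed would also need `link` and `description` elements on the channel; they are left out to keep the location tagging visible.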

We want knowledge-in-the-world, but not to be put in an opprobrious position with regards to a device: dependent on it for access to meta-information, or subject through it to a tirade of messages in which i am not interested.

TCP/IP packets carry a trace of how many hops they have been through: a time-to-live number in the IP header is decremented by one by each router the packet passes through. One has the idea of possibly filtering packets by TTL and giving precedence to those that originate nearby. This would lead to some bleed-off of information at the edges, and could be interestingly combined with a p2p network like BitTorrent - data would compete to bring themselves nearer to you.
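A sketch of the ordering step, under one large assumption: the receiver sees only the remaining TTL, not the value the sender started with, so the hop count has to be guessed. The guess used here is that the sender began at the smallest of a few commonly used initial values (64, 128, 255) that is not below what was observed. The function names are hypothetical, and packets are stood in for by simple `(ttl, payload)` pairs.

```python
# Assumed list of common initial TTL values; a sender using anything
# else will be misjudged by this heuristic.
COMMON_INITIAL_TTLS = (64, 128, 255)


def estimated_hops(observed_ttl: int) -> int:
    """Guess how many routers a packet crossed from its remaining TTL."""
    if not 1 <= observed_ttl <= 255:
        raise ValueError(f"TTL out of range: {observed_ttl}")
    initial = min(t for t in COMMON_INITIAL_TTLS if t >= observed_ttl)
    return initial - observed_ttl


def by_nearness(packets):
    """Order (observed_ttl, payload) pairs so the nearest origins come first."""
    return sorted(packets, key=lambda packet: estimated_hops(packet[0]))


queue = by_nearness([(115, "far"), (63, "near"), (250, "middling")])
```

Since a sender can set any initial TTL it likes, this gives precedence rather than proof of locality; it is a courtesy between neighbours, not a security measure.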

Our map of the world is subject to error correction. Where we learn new things we can propagate them; when we notice inaccuracies, we can expand upon them.

Patterns in one place that can be noticed, can be amended and directed to different social purposes. One example is the increase in foot traffic and local business when one-way roads are converted back to two-way.

In Bangalore, the road pattern is interesting; between what might be described as "pavement" and as "road" is a dusty area of doubt and uncertainty a metre and some wide. Motorbikes and auto-rickshaws scoot down it, but people walk on it too. Alongside roads where pavements have been built, the dusty ramp is reclaiming the border, and people walk there, rather than on the unyielding, uncomfortable paving stones.

Next to this unborder zone is all activity; small stalls selling paan and tobacco, fried products, transactions. It allows the traffic flow some flexibility - smaller vehicles can escape from near misses. Traffic seems to flow faster, at a more sustained if lower-maximum rate.

In Delhi, the pavements are high; twice as high off the road surface as in any European town, the edge painted with garish yellow and black "keep away" stripes. This small, borderline feature of the system exerts tremendous social and spatial forces around it, in its "influence zone".

The administration of the different networks which power our city - an analogy, i hope an evident and not a tortured one - can be viewed as a programming of the city. Each has its inputs and outputs, and some - like water and electricity, or water and sewage, or transport and housing - are codependent. The calculations for top-down allocation are based on we-don't-know-what criteria, assuming an ongoing set of ecological and technological behaviours.

Aspect Oriented Programming, and the related Design by Contract, offer the idea of a set of legally defined interfaces. As an object in the system, you publish a guarantee about your behaviours and about the information you will provide if you are asked certain things. A guarantee about future behaviour is a description of intent about future actions given some situation. Money operates as a version of such a guarantee: "i promise to pay the bearer the sum of five pounds (in the event that he/she asks for them, and that i happen to have the gold on me)".

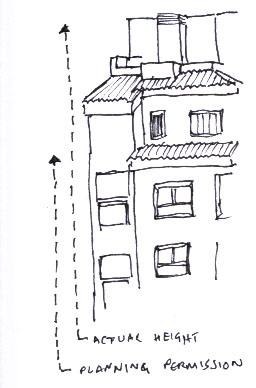

Consider the case in my mother's village in Spain, where a new building has gone up on the sea front a couple of storeys higher than planning permission might suggest. She talked about it with her bank manager, who said, "We gave a loan to fund that building, you know; if we'd known how the structure would impact the town, we would never have funded it."

Drawings and models are provided by CAD and GIS programs; planning applications are connected to agents and applicants, are visible at a certain distance or to a certain constituency... view metadata about these plans and models as a kind of contract. This kind of design by contract can be monitored daily; a sensor would shout out when the building rose over the height described by the planning permission in the model. A message could be sent, in any case; a collection of feeds, about different kinds of building development and planning projects in the area.
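Treating the permission as a contract and the sensor as its monitor might look something like this. It is a minimal sketch: the types `PlanningPermission` and `HeightReading`, the site name and every figure are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlanningPermission:
    """The published guarantee: this site will not rise above this height."""
    site: str
    max_height_m: float


@dataclass(frozen=True)
class HeightReading:
    """One daily observation from a (hypothetical) height sensor."""
    site: str
    day: str
    height_m: float


def breaches(permission, readings, tolerance_m=0.0):
    """Return the readings for this site that exceed the permitted height."""
    limit = permission.max_height_m + tolerance_m
    return [r for r in readings if r.site == permission.site and r.height_m > limit]


def alert(permission, reading):
    """Format the message a feed would carry when the contract is broken."""
    over = reading.height_m - permission.max_height_m
    return (f"{reading.day}: {reading.site} is {over:.1f} m over "
            f"its permitted {permission.max_height_m:.1f} m")


permission = PlanningPermission("seafront-block", 12.0)
readings = [
    HeightReading("seafront-block", "2005-03-01", 9.5),
    HeightReading("seafront-block", "2005-03-20", 18.0),
    HeightReading("elsewhere", "2005-03-20", 30.0),
]
messages = [alert(permission, r) for r in breaches(permission, readings)]
```

The same messages could feed the bank manager as easily as the neighbours; who subscribes is a separate question from what is measured.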

A temporal map of planning permissions in Tower Hamlets best seen at worldkit

[ some stuff about agent cities, dumb bots and sensor motes ]

This does not have to be a grand architecture, network wise or otherwise; 'nodes' broadcast information about themselves, or references to that information, over very low power radio, to whoever might care to listen. Given atmospheric standards for interpreting the simple data models and rebroadcasting them, any free service can plug in and make use of the information.

One outlined future, that the mobile telecommunications providers and the governments that license them appear to be most interested in, is putting sensors on us; monitoring the location of a device and therefore its user and interface ever more accurately; triggering a licensed subset of sensors to talk to us over a national, centrally billed network.

Structured information exchange over local, arbitrarily-run networks, where data can be accessed very locally at high priority and with high density, can provide many infrastructural benefits - warning about negative atmospheric events, re-routing for and planning of traffic, balancing of energy usage and storage. There is no need, in a lot of cases, for this information to be available more widely than locally.

How do we avoid accusations of excessive technocracy, and how do we arbitrate around any systematic necessity to build a movement log of a participant? Most of the information flow here is between machines, and most conclusions that the designer is looking to reach are influenced by the behaviour of crowds, not of individuals. Systems like Oyster attempt to extrapolate, or model, the former out of the latter. But a sensor network can just take a snapshot of a crowd, its density; it often doesn't need to know or care about the level of detail that would make images of human faces decipherable.

How to build interfaces into the world, in a way which allows useful and local information to come through, that is low cost and provides a low barrier to entry in terms of user interface?

The state of open source speech recognition is not great, unfortunately. There are alternative feedback interfaces, maybe; the story about the talking sign describes one.

An idea in "landscape architecture" terms is to leave certain areas undefined in use purposes - not overtly owned, or developed - and see how they develop spontaneously. A kind of "planned random" around, particularly, the edges of areas which have been devoted to different kinds of use.

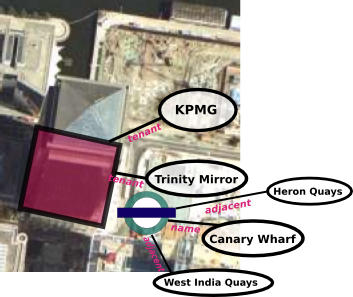

This shows the border between the Canary Wharf and "Poplar" areas of East London. A motorway of about 8 lanes lies to the left.

In programming, and working with networked information, the presence of a big barrier or monolithic structure tends to provoke a reaction to partition the problem and work round it, replacing its function in the solution, piecemeal.

Especially good is if we can begin to define isolatable subsystems, elements of the network that we can treat as a black box in terms of inputs and outputs. Also, if we can encapsulate the influence zone, if only the major elements of it, of a system or network; the amount of electricity needed to power a water pumping station, and how much water comes back from a sewage treatment facility, and how much clean or grey water that requires to run; the catchment area for water and sewage delivery, and average household usage patterns per area.

We can write rules for the behaviours of these systems, and write protocols as to how they react to each other; what is exchanged, who is involved, and what they can mutually expect in the future as a result of any past interactions.
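One way to picture such black boxes: each subsystem declares only what it consumes and what it produces, and a simple rule over the declarations shows where the network as a whole runs short. The `Subsystem` type and all the quantities below are made up for the sake of the sketch; they are not measurements.

```python
from dataclasses import dataclass


@dataclass
class Subsystem:
    """A black box known only by its daily inputs and outputs."""
    name: str
    inputs: dict   # resource -> units consumed per day
    outputs: dict  # resource -> units produced per day


def net_flows(subsystems):
    """Sum production minus consumption for every resource in the network."""
    totals = {}
    for s in subsystems:
        for resource, amount in s.outputs.items():
            totals[resource] = totals.get(resource, 0.0) + amount
        for resource, amount in s.inputs.items():
            totals[resource] = totals.get(resource, 0.0) - amount
    return totals


def shortfalls(subsystems):
    """Resources the network consumes more of than it produces."""
    return sorted(r for r, net in net_flows(subsystems).items() if net < 0)


# Invented figures, in arbitrary units per day.
network = [
    Subsystem("pumping station", {"electricity": 40.0}, {"clean_water": 1000.0}),
    Subsystem("households", {"clean_water": 900.0, "electricity": 10.0}, {"sewage": 850.0}),
    Subsystem("treatment works", {"sewage": 850.0, "electricity": 20.0}, {"grey_water": 800.0}),
]
flows = net_flows(network)
```

Here the only shortfall is electricity, which is to say the point at which this little network depends on another one outside it.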

This can be viewed as a kind of ongoing, micro-negotiated social contract. You are standing on a piece of land. You can see a usage agreement, a kind of license, for the use of this bit of space. You are perhaps entitled to sit here, but not to sell products for a profit, or to sleep here, or to gather more than N people. The licensing authorities can be contacted. Perhaps you need a license to make micro-broadcasts at certain frequencies. Given a nominal or nuisance fee, a software interface would conduct the licensing application instantaneously.

If your house was a robot, if your bus was a robot, if your table was a robot; is this problematic, as a way of maintaining that you not be a robot?

March 2005