In the revived-tilde mailing list, we had a round of “about me” posts in which I said something about having quit coding (professionally) and starting up an Etsy shop which, I didn’t explain in detail because it woulda sounded like a commercial, but I find I want to justify it as “no, it really is nerdy.” Which it is.

My 3d printer operates on 30-40w rayon, polyester, or cotton filament. It uses proprietary(ish) files but there’s an open-source project that produces them from SVG files so I do most of my CAD work in Inkscape. I got tired of running a USB stick back and forth to it so I hooked up a Pi Zero in USB Gadget mode, though at the moment I haven’t replaced its corrupted SD card so I’m back to the USB stick.

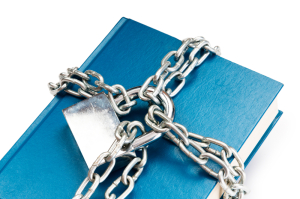

In honor of the revivification of tilde, I ran a custom print:

It’s a mini composition book cover; takes Dollar Tree notebooks (they come in a 3-pack) and closes with a Zebra or G2 size pen. I’ve had a lot of fun making them.

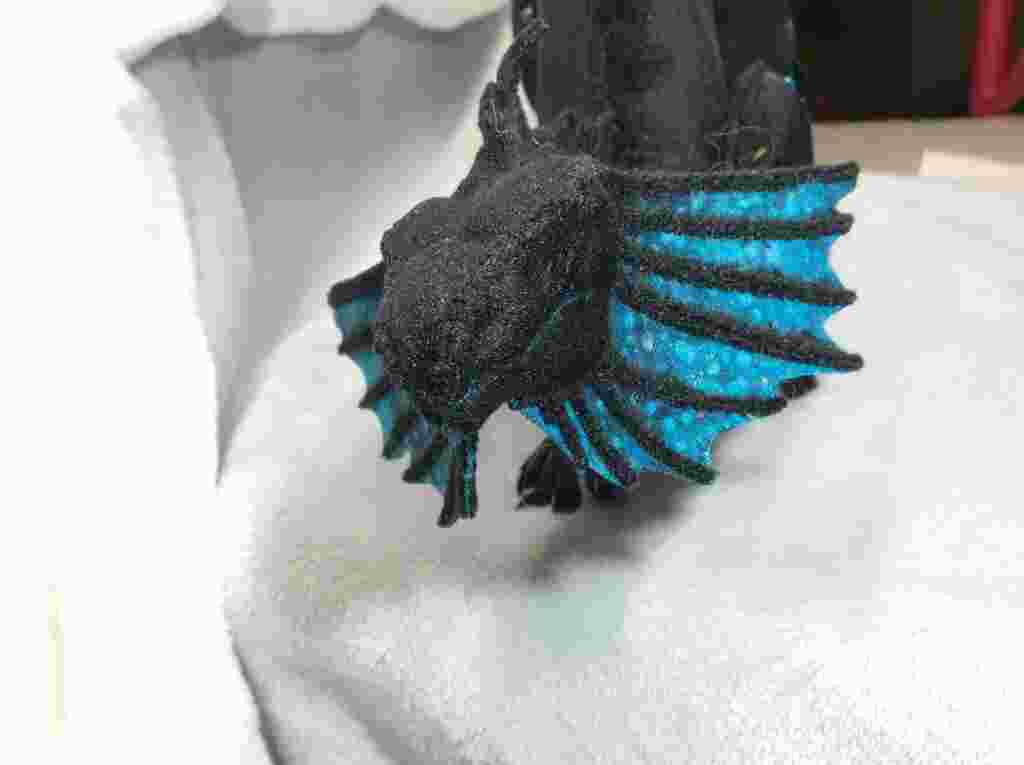

That’s not actually why I bought the printer though; I got it to make dragons. I started small, and somehow these little ones have caught on and that’s most of what I sell on Etsy though not usually in holo-foil.

My biggest challenge, as with most 3d printers, is printing stuff that’s larger than the bed, and that’s what I’ve been doing lately (with a detour to make the tiny critter above).

The print bed is 5x7”, and that dragon is right around 24” long, with an eventual wingspan of a little more than that, so it’s been an interesting challenge. The previous largest print I’ve done is a leafy sea dragon (not to be confused with a leafy seadragon), around 12” tall.

It’s a little more expensive than the usual 3d printer (though nobody pays MSRP of course), and a little harder to use, but I think they stand out from the usual ABS crowd.

(If you want the non-1995-artifact versions of the pictures, you can poke around my sewing blog or my mastodon.art account or, if you don’t mind a silo, my Instagram.